Was California's Proposition 8 Election Rigged?

Related article: The complete report, Citizen Exit Polls in Los Angeles County: An In-Depth Analysis, by R. H. Phillips

INTRODUCTION

By Sally Castleman and Jonathan Simon

This report presents evidence that in the November 2008 election the tabulation of the vote for California’s Proposition 8, the ballot initiative repealing marriage equality, was probably corrupted. It is beyond the scope of this study to know if any corruption was due to honest error or intentional fraud. Further investigation is warranted.

Much media attention has been focused on California over the past several years regarding gay marriage, abortion, and other hot-button social issues. In November 2008, two such issues appeared on the California ballot: Proposition 8 outlawed marriage equality (a “yes” vote opposed same-sex marriage); Proposition 4 mandated a waiting period and parental notification before non-emancipated minors were allowed an abortion (similar measures had been defeated twice before).

Election Defense Alliance, a national nonprofit group dedicated to restoring integrity and public accountability to the electoral processes, worked with several other election integrity groups to conduct public election verification exit polls (“EVEP”) in eight states in November. The polls were meant to validate or detect problems with the official vote counts. Ten sites, representing 19 precincts, were located in Los Angeles County, California. This paper presents the analysis of the L.A. County polling results as they pertain to Proposition 8.

I. The Need and the Method

The need.

Throughout history, elections have created opportunities for fraud, from massive outcome-determinative actions to small local shenanigans. Fraud has been perpetrated by the voters, the parties, election officials, programmers, technicians and others with access to the voting equipment itself. As governments have become larger, more powerful, and in control of more money, the motive for election theft has, if anything, increased. With the advent and proliferation of electronic, computerized, software-driven election equipment, the available means have increased dramatically and have become available to insiders, outsiders, and hackers even physically far removed from any voting or tabulating site.

Thus the need to validate or verify official results has become of extreme importance. If we, the citizenry, cannot know that the official election outcomes are valid, democracy is in serious jeopardy—this is equally true whether the election is occurring abroad in a third-world nation or here at home in the presumed “beacon of democracy.” When counting is done inside computers, the public can no longer observe that the votes are counted accurately. More and more people are coming to understand that with secret software controlling the counting and tabulating of our votes, the processes are no longer transparent and no one can be sure that the announced election results are accurate.

In the absence of verifiable election procedures and public accountability, one of the few remaining ways to determine whether an election has been properly counted is through exit polls. Exit polls are routinely used to verify election results in Europe and elsewhere, and through 2004 the mass media in the US conducted exit polls that were considered very reliable. Since 2005, however, the broadcast media no longer make their raw data from exit polls available to the public, and the “projections” announced on election night come from a proprietary mix of exit poll data and official count results.

In 2008, the “official” exit polls, commissioned by the National Election Pool (NEP), a consortium of ABC, CBS, CNN, FOX, NBC and the Associated Press, were run by Edison Media Research and Mitofsky International, commonly referred to as Edison-Mitofsky, using a polling method very different from EVEP. Edison-Mitofsky samples only a small number of voters as they leave a small sample of polling sites, initially stratifies the raw results according to its best estimate of the electorate’s composition (an estimate based, at least in part, on exit polls from prior elections which have been adjusted to match the official results and thereby demographically distorted to the extent that those results were subject to mistabulation), and progressively adjusts those results to match the official results as these become available through the course of the evening.

Thus, from the standpoint of verification, the released media exit polls represent polluted data. While they may shed some important light on the conduct of the election (for example, if the adjusted exit poll results are forced to present a highly unlikely or distorted demographic profile of the electorate), there is a need for independent exit polls that are designed specifically to verify official tabulations and take a different approach in order to do so.

The method.

In November 2008 two election reform groups, Election Integrity (EI) and Election Defense Alliance (EDA), conducted exit polls at 70 sites in 10 states. Their method, Election Verification Exit Polling, EVEP, had been developed by Professor Steve Freeman of EI, Dr. Jonathan Simon of EDA, and Professor Ken Warren, President of The Warren Poll. The protocol had been employed in two prior elections on a smaller scale – i.e., at fewer sites.

Unlike traditional exit polls that use sampling methods to predict wide geographic area results, EVEP measures only the accuracy of official results at any given polling site. The EVEP methodology is meant to answer, for each polling site where it is employed, the single question: “Were the votes from this polling site correctly counted?” In this way, much of the uncertainty that plagues media exit polls is avoided. With the EVEP system it is not necessary to find precincts representative of the jurisdiction as a whole, or to poll a representative sampling by gender, race, age or party affiliation, as long as the results can be weighted to yield a correct sample for that particular site.

Freeman calls the difference between exit poll percentages and the official tally for the given site Within Precinct Disparity or WPD.
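As a minimal sketch of that definition, the calculation is a simple subtraction. The function name and the sign convention (exit poll minus official, measured on the “No” share) are assumptions made here for illustration; the inputs are the aggregate Proposition 8 figures reported in the Results section of this paper for the 10 polled L.A. County sites.

```python
def within_precinct_disparity(poll_pct, official_pct):
    """WPD in percentage points: exit-poll share minus official share.

    The sign convention is an assumption for this sketch: a positive value
    means the exit poll showed a higher share than the official count.
    """
    return poll_pct - official_pct


# Aggregate "No" shares on Proposition 8 for the 10 polled sites,
# as reported in the Results section of this paper.
evep_no = 60.54      # EVEP exit poll
official_no = 52.79  # Election Day official results

wpd = round(within_precinct_disparity(evep_no, official_no), 2)
print(f"Proposition 8 WPD: {wpd} percentage points")
```

The same function can be applied site by site; averaging the per-site values would then give an overall figure for the polled sites.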

The basic question asked in this paper is whether the WPD for the polled sites was within statistically-expected bounds and whether the detailed pattern of disparity suggests that the WPD resulted from a flaw in the exit polls or a flaw in the official vote count.

The protocol.

A one-page questionnaire is prepared, regarding several contests in each locale as well as basic demographic data. A “baseline race,” an election contest with an easily-predicted outcome, is included at each site for comparison during analysis. A questionnaire on a clipboard is handed to each voter as she or he exits the polling station. Voters are asked to participate by filling in the questionnaire privately and dropping it into a “ballot box,” thus ensuring anonymity. Volunteers are supplied forms on which to note those voters who are approached but choose not to participate. The “refusal” data includes gender, apparent racial background and approximate age. Gender, race, and age range are also filled in on the questionnaires by those voters who participate. Respondents are also asked to indicate party affiliation.

Multiple poll-takers work at every site throughout the entire day, beginning before the polls open, with the goal of reaching every voter. Training is mandatory, and poll-takers are taught methods of randomization to use whenever too many voters are exiting the poll site at once to maintain a 100% approach rate. The training stresses the importance of randomization and neutrality.

The ballot box remains sealed throughout the day. To document full transparency, volunteers film the sealing of the box in the early morning, the transporting of the box to a public place for counting at the end of the day after the last voters have left, the opening of the box, and an example of the public counting process. At least two people count every vote.

The researchers.

Professor Steven Freeman teaches research methods and survey/polling design at University of Pennsylvania. He co-authored the book Was The 2004 Presidential Election Stolen? Exit Polls, Election Fraud, and the Official Count, analyzing the official exit polls and vote tabulations from the 2004 election.

Sally Castleman, outgoing National Chairperson of Election Defense Alliance, has extensive experience initiating, organizing and running projects both in the private and public sectors. She took responsibility for 37 of the polling sites, in eight states, creating systems and materials, conducting trainings, and overseeing the analysis.

Marj Creech advised and assisted with the entire EVEP endeavor. She had been involved in eight prior exit polls in five states, as a pollster, planner, leader, coordinator, and observer.

Judith Alter, Professor Emeritus at UCLA, Founder and Director of Protect California Ballots, led the polling efforts at the 10 sites in Los Angeles County, CA. This was Alter’s sixth time leading an exit polling initiative; likewise, many of her 110 site leaders and poll-takers had previous exit polling experience.

Dr. Jonathan Simon has a background in polling statistics. He has performed extensive analysis of exit poll results from the 2004 and 2006 elections.

Ken Warren has polled for the media, government, private clients, and politicians over the last two decades.

The analysis of the data was done by Richard Hayes Phillips, PhD. A former professor, Phillips has extensive experience as an investigator, data analyst, writer and expert witness. In recent years Phillips is best known for spending three years obtaining and meticulously analyzing more than 125,000 ballots, as well as poll books and voter signature books, from the 2004 election for 18 Ohio counties, culminating in his seminal book Witness to a Crime.

Volunteers.

There were a total of 312 local leaders and poll-takers for the 37 sites that Castleman led. Twelve sites were in California. This report is focused on 10 of the California sites, all in Los Angeles County. (The 2 sites in Northern California, San Francisco County and Alameda County, used a questionnaire that did not lend itself to the analysis used in this paper.) Professor Alter, the 110 volunteers cited above, and another 20 volunteers who counted questionnaire responses all participated in the L.A. effort.

II. Results

Overview.

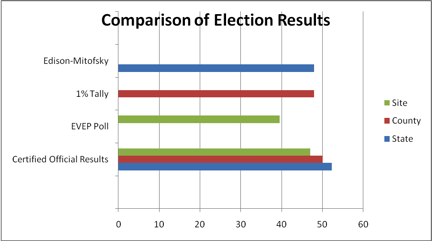

The initial results released by Edison-Mitofsky, immediately after the poll closings in California, presumably before any adjustments in the numbers were made to conform to outcomes (see section I, above), indicated a defeat of Proposition 8. The official election results from the Secretary of State's office, http://www.sos.ca.gov/elections/sov/2008_general/contents.htm, (and of course the final and conforming Edison-Mitofsky exit poll totals) declared Proposition 8 to have passed.

Edison-Mitofsky results for the state: 48% Yes - 52% No.

Election Day official results for the state announced the night of the election: 52.2% Yes - 47.8% No.

The final certified results for the state (29 days later): 52.3% Yes – 47.7% No.

Election Day official results for L.A. County: 50.4% Yes – 49.6% No

Random 1% tally results for L.A. County: 48% Yes - 52% No*

The final certified results for L.A. County: 50.04% Yes - 49.96% No.

Election Day official results for the 10 sites in L.A. County that were polled: 47.2% Yes - 52.79% No

The EVEP results in the 10 polled L.A. sites: 39.46% Yes - 60.54% No.

The final certified results for the 10 polled sites: 47% Yes – 53% No.

* California has a mandated random 1% hand tally, an “audit” of 1% of the precincts in each county. Fifty one precincts in L.A. County were included in the 1% manual tally; none were precincts included in the EVEP project.

III. Within Precinct Disparity (WPD)

Response rates.

The percentage of voters who responded to the EVEP polling request ranged from 38% (at a site where exit poll volunteers were harassed by poll workers and forced to stand beyond the legal location for an exit poll, far from the door) to 70%. The average response rate was 56.4%.

Proposition 8 WPD.

Nine of the ten L.A. polling sites had a higher percentage of “No” votes in the exit poll than in the official numbers. The disparities ranged from -2.3% to 17.7%, a positive percentage indicating a higher percentage of “No” votes in the EVEP than in the official results. The average WPD, or discrepancy between the official results and the polling results, is 7.75%. As detailed in the paper, this is considered a significant disparity.

IV. The Analysis: Possible Reasons for Disparity Between EVEP and Official Results

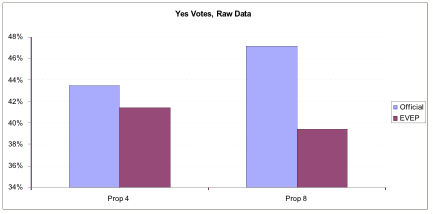

The four possible reasons to explain the large WPDs are discussed in the paper. For the analysis, Proposition 4, which mandated parental notification and a waiting period before a minor could undergo an abortion, was used as a benchmark against which the polling results of Proposition 8 are measured. Because the two are similarly controversial social issues, it was anticipated that the populace would for the most part split on Proposition 4 (abortion) similarly to Proposition 8 (gay marriage).

The citizens’ exit polling data for Proposition 4 was within 2% of the official results; 2% is considered within the margin of error. Furthermore, the disparities between polling data and official results for Proposition 4 in some precincts favored passage of Proposition 4 and in other locations favored defeat of the proposition. This is a natural pattern which provides further indication that the polling results indeed reflect the electorate’s in-booth choices.

The differential between the official results and the polling results for Proposition 8, however, was 7.75%, far outside the margin of error.
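For readers unfamiliar with the term, the sketch below shows the textbook margin-of-error formula for a polled proportion. It is not the calculation used in the full report, which is not reproduced in this summary. It assumes simple random sampling, and the respondent count is a hypothetical placeholder, not a figure from the study.

```python
from math import sqrt


def margin_of_error(p, n, z=1.96):
    """Half-width of an approximate 95% confidence interval for a proportion.

    Assumes simple random sampling; z=1.96 corresponds to 95% confidence.
    """
    return z * sqrt(p * (1 - p) / n)


# Hypothetical respondent count, chosen only to illustrate the formula.
n_respondents = 1000

# p = 0.5 gives the widest (most conservative) margin.
moe = margin_of_error(0.5, n_respondents)
print(f"Margin of error: {100 * moe:.1f} percentage points")
```

With these illustrative inputs the margin is about 3.1 percentage points. Larger samples shrink it in proportion to the square root of the sample size.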

Comparison of Official Results to EVEP Results for Propositions 4 and 8

Since the need for further analysis of Proposition 8 was thus indicated, the EVEP results were then adjusted for age, gender, ethnicity/race, and party affiliation, to compensate for any demographic group(s) being over- or under-polled.

The data indicates that the WPD for Proposition 4 narrowed to 0.64%, virtually spot-on, while Proposition 8 was still well beyond the margin of error at 5.74%.
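The kind of adjustment described above can be sketched as a reweighting of respondent groups to match the known makeup of the voters at a site. This is an illustration under stated assumptions, not the procedure used in the full report: it weights on party affiliation only, and every number in it is hypothetical.

```python
def weighted_yes_share(yes_rate_by_group, share_by_group):
    """Overall Yes share when each group's Yes rate is weighted by its share."""
    total = sum(share_by_group.values())
    return sum(
        yes_rate_by_group[g] * share_by_group[g] for g in share_by_group
    ) / total


# Hypothetical Yes rates observed among exit-poll respondents, by party.
yes_rate = {"Democratic": 0.30, "Republican": 0.80, "Other": 0.40}

# Hypothetical makeup of the respondents versus all voters at the site.
respondent_share = {"Democratic": 0.60, "Republican": 0.20, "Other": 0.20}
electorate_share = {"Democratic": 0.50, "Republican": 0.30, "Other": 0.20}

raw = weighted_yes_share(yes_rate, respondent_share)
adjusted = weighted_yes_share(yes_rate, electorate_share)
print(f"Raw Yes share: {raw:.2%}, adjusted Yes share: {adjusted:.2%}")
```

In this made-up case Republicans were under-polled, so the adjustment raises the estimated Yes share from 42% to 47%. The adjustment can only move the estimate as far as the group Yes rates allow, which is why a disparity that survives reweighting is informative.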

Comparison of Official Results to EVEP Results for Propositions 4 and 8, with EVEP Data Adjusted for Party Affiliation

V. Conclusion

While this EVEP analysis strongly suggests that the official results for Proposition 8 were compromised in the tabulation process, the impact of this paper extends far beyond that particular vote and far beyond California. Voting is the bedrock protocol of any democracy, fledgling or venerable, around the globe or here at home. If the vote-counting process is not observable, that bedrock turns rapidly to quicksand.

It is too late to change the official results of Proposition 8 but it is not too late to recognize the current vulnerabilities of computerized voting throughout the United States. Our election officials who have been entrusted with the responsibility to run transparent elections are not doing so; counting votes inside black boxes renders observation of the tabulation process impossible. Even the computer log books and the like are strictly off limits to examination. The candidates and the citizens cannot know that official election results are reliable.

Electronic election equipment remains in use despite persistent evidence of computer failures, election rigging and hacking, despite the control of our elections by equipment vendors with established partisan proclivities, and despite the revelations, based on exit polling and pre-election tracking polling, that results have consistently shifted in the same direction, always to the right. Because verification by observation has been precluded by computerization, only indirect or statistical methods of verification are available.

Thus, Proposition 8 results and the EVEP analysis by Election Defense Alliance offer another opportunity among many to discover what is amiss and to make urgently needed changes. An investigation is warranted into how the fraud or gross errors happened. This knowledge is necessary for running all future elections. While it is true that reforms have been made in California and elsewhere, it appears too many serious holes remain in the system.

The evidence, in this paper and elsewhere, is strong that computerized vote-counting cannot be trusted to support our democracy. Not only is further investigation warranted but a return to a fully observable vote-counting process is imperative. Our democracy will not survive if we cannot know that our election results are accurate and honest.

Executive Summary

The unexpected passage of Proposition 8 in California, banning same-sex marriage, has led election integrity advocates to wonder aloud if the official results were legitimate. Fortunately, two citizen groups seeking to restore electoral integrity, Election Defense Alliance and Protect California Ballots, conducted exit polls at 12 California polling sites, 10 of them in Los Angeles County. The analysis of this data is presented here.

According to the official results, Proposition 8 passed overall in the state with 52.2% of the vote. In 10 L.A. county polling sites, representing 19 precincts, the official results were 47.2% Yes - 52.79% No, but the exit polls for the identical 19 precincts showed 39.46% Yes - 60.54% No. Is there a way to discern if the exit polling was flawed or the official vote count was compromised?

Proposition 4, requiring a waiting period and parental notification prior to an abortion, is clearly the most reasonable benchmark with which to compare Proposition 8, because both were “hot-button” social issues with heavily overlapping support among the electorate. Exit poll data bear this out. In the ten polling places in Los Angeles County where citizen exit polls were conducted, 66.63% voted for both propositions or against both propositions.

The official results and the exit poll results for Proposition 4 differed by only 2.06%, well within the margin of error, whereas Proposition 8, with a disparity of 7.75%, is far outside that margin. If the official vote counts were accurate and participation in the exit poll was influenced by the “politics” of those polled, we would expect a very similar effect on the results for the two Propositions, yielding very similar disparities rather than the very different disparities we found.

Furthermore, the Proposition 8 disparity appears in nine of the 10 sites in the same direction; i.e., in 90% of the sites, the exit polling data show more votes against Proposition 8. One would expect that disparities should balance out – some in one direction, and some in the other. The very fact that this was the case in regard to Proposition 4 but not Proposition 8, together with the large difference in disparity between the two, suggests the official results for Proposition 8 are compromised.
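The “balance out” expectation can be given a rough number with a sign test. The sketch below treats each site as an independent trial in which, absent any systematic effect, the disparity is equally likely to fall in either direction. Both the independence and the 50/50 assumption are simplifications introduced here, and the test cannot by itself say whether a one-sided pattern comes from the poll or from the count.

```python
from math import comb


def prob_at_least(k, n, p=0.5):
    """Probability of k or more successes in n independent trials."""
    return sum(comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(k, n + 1))


# Nine of the ten polled sites showed the disparity in the same direction.
one_sided = prob_at_least(9, 10)  # 9 or 10 sites in one named direction
two_sided = 2 * one_sided         # 9 or 10 sites in either direction

print(f"One-sided: {one_sided:.4f}, two-sided: {two_sided:.4f}")
```

Under these assumptions the one-sided probability is 11/1024, or roughly 1.1%, and the two-sided probability is about 2.1%.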

There are four possible reasons for a large disparity between exit polls and official results:

(1) a basic flaw in the exit poll methodology;

(2) many voters lying on the questionnaires;

(3) a non-representative sample of voters responding; or

(4) the official results being erroneous or fraudulent.

As for possibilities 1 and 2, the constituencies for Propositions 4 and 8 were similar enough that it is hard to imagine a bias that would show up only in results for Proposition 8 and not in Proposition 4. The fact that the polling results accurately reflected the official count for Proposition 4 may be taken as validating both the vote count and the exit poll as conducted at the L.A. County sites.

As for possibility 3 above, the respondents actually were not a truly random sample of the voters, since some voters chose not to participate. To determine if this accounts for the large disparity, the full analysis goes further and adjusts the data for gender, race, age and party affiliation.

That is, the polling data are reweighted so that the gender, ethnicity, age and party affiliation of the respondents more accurately reflect the voting population for a given site.

Even with the data so normalized, the disparities remain too large to dismiss as random. The overall Proposition 8 disparity remains 5.74% (as opposed to 0.64% for Proposition 4 when normalized). As seen on the bell curve below, the Proposition 8 results are still well outside the margin of error, a glaring red flag.

To answer possible criticism that it might not be accurate to apply the percentage of votes of the Republican poll respondents to those Republicans who voted but did not participate, the data is examined further. It shows that if our samples of Democratic, third-party, and Independent voters were representative of the electorate, then even if every non-participating Republican voter registered a Yes vote for Proposition 8, it would not have been mathematically possible to come out with the official results.
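The logic of that worst-case bound can be sketched as follows. All counts are hypothetical and chosen only to show the arithmetic; the function name and the data layout are likewise assumptions of this illustration, not taken from the full report.

```python
def max_yes_share(voters, respondents, yes_among_respondents, bound_group):
    """Upper bound on the Yes share if every non-respondent in bound_group
    voted Yes, while other groups are assumed to vote like their respondents."""
    total_yes = 0.0
    for group, n_voters in voters.items():
        n_resp = respondents[group]
        n_yes = yes_among_respondents[group]
        if group == bound_group:
            # Observed Yes responses plus every non-participating voter.
            total_yes += n_yes + (n_voters - n_resp)
        else:
            # Extrapolate the respondents' Yes rate to the whole group.
            total_yes += n_voters * (n_yes / n_resp)
    return total_yes / sum(voters.values())


# Hypothetical site: voters, poll respondents, and Yes responses by party.
voters = {"Democratic": 500, "Republican": 200, "Other": 300}
respondents = {"Democratic": 300, "Republican": 80, "Other": 170}
yes_responses = {"Democratic": 90, "Republican": 60, "Other": 68}

bound = max_yes_share(voters, respondents, yes_responses, "Republican")
hypothetical_official_yes = 0.47  # placeholder, not a figure from the study

print(f"Maximum attainable Yes share: {bound:.1%}")
print("Official figure attainable:", bound >= hypothetical_official_yes)
```

In this made-up site the most extreme assumption about non-participating Republicans yields at most 45% Yes, so an official figure of 47% could not be reached through Republican non-response alone.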

The only remaining explanation is possibility 4, the official results being erroneous or fraudulent. Further investigation is warranted, one that includes penetrating the proprietary secrecy behind which the voting equipment corporations have hidden their processes.

Furthermore, every candidate and every American should be alarmed enough to reject our current election conditions in which over 95% of our votes are counted electronically with no means of citizen oversight.

Our own cherished democracy—if it continues to count its votes in secret—is no more immune to anti-democratic manipulation than is the newest and shakiest democracy halfway around the world.